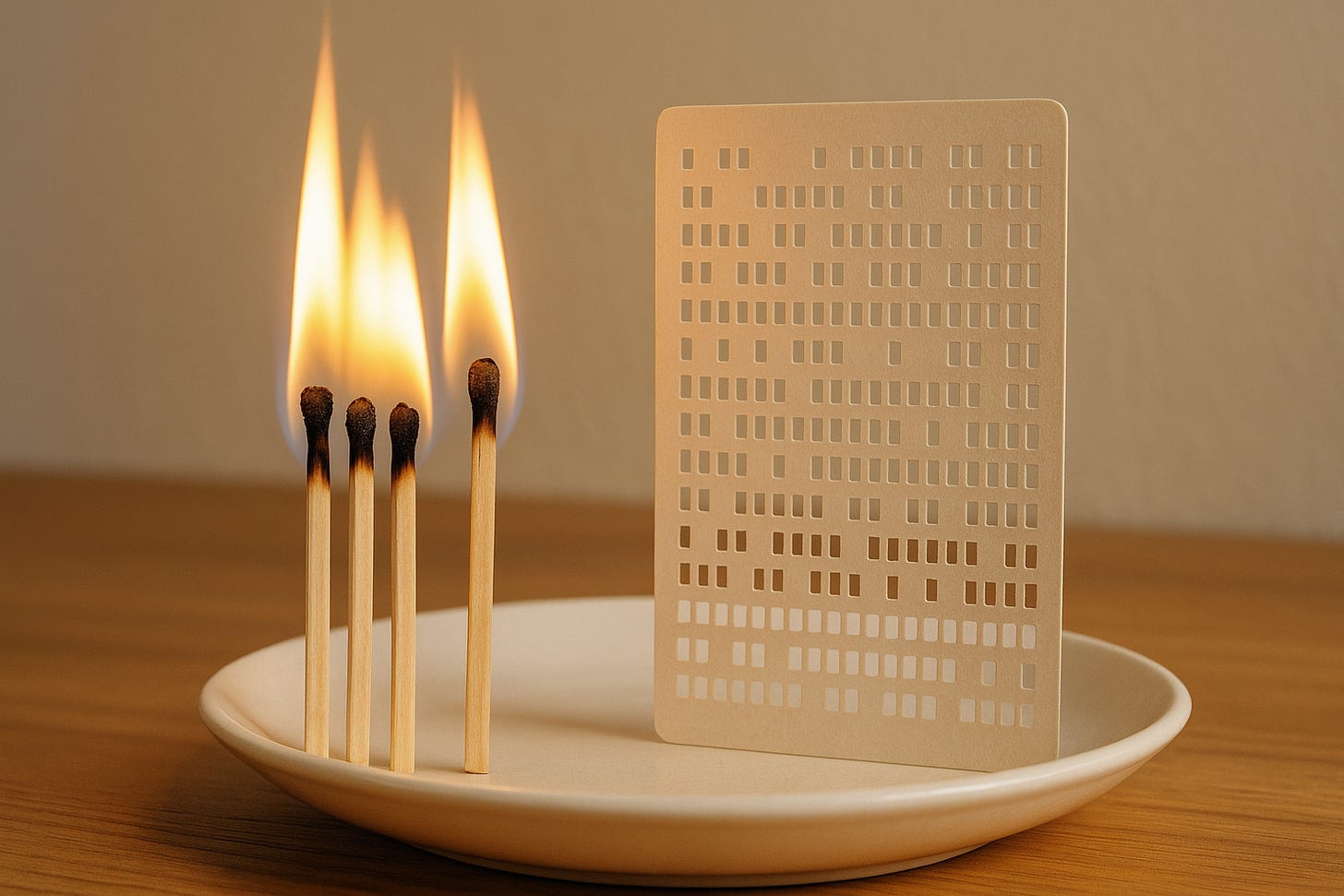

prompt and catch fire

tales from the human in the loop

do what now?

The last time I did any serious coding, I was on a professional sabbatical during the most uncertain stretch of the pandemic in 2020. I’d helped kick-start a community support organization, and we had hundreds of volunteers and dozens of projects looking for volunteer labor.

I had hours to fill.

So I filled them the way any basement-dwelling tech tinkerer would: I taught myself Django and React and built a web app to help volunteers and project leads connect.

To say things have changed a lot since I first typed npx create-react-app frontend five years ago would be a vast understatement, not just because the frameworks and infrastructure have changed but also because the way we approach creating software is undergoing a tectonic shift.

I also bought a house. It’s my first house. And it’s a 110-year-old house. I’d be getting out over my skis just keeping track of expenses and maintenance and goals on any house, but a house that’s stood for over a century commands a special bit of attention. I prefer to outsource as much of my brain as possible, which in this case turned into a convoluted property management workspace in ClickUp that’s barely holding it together.

I hate it, and so I’m building a web app called wellstead to help me simplify my life.

And — stick with me now — I’m doing it mostly by hand.

so many failing tests

Somehow I got it stuck in my head that test-driven development is something I really enjoy. I got to enjoying it so much that my only open-source contribution ever is to a testing library I was using while building the aforementioned web app.

Tonight my goal was to apply some basic styling to what was otherwise a plain-text list of service categories (like “plumbing” and “electrical”) to help me see how this most basic seed of a component could eventually grow into a functioning app.

That meant getting AI to help me learn how to break stuff and how to create the conditions under which things would break. Reflecting on it now, the exercise is not unlike product development, which calls for a falsifiable hypothesis to build a solution against. In this case, though, I had no idea how to formulate the hypothesis, let alone how to test it.

Claude and I worked on just 10 lines of test code that defined “broken” precisely enough that installing a library and writing 12 lines of component code would fix it. All in, that’s fewer than two dozen lines, which nevertheless taught me a lot about a component library, a CSS framework, how to test my use of both, and how to work effectively with AI to learn about them.

Guided by some weird intuition I developed years ago, one that I suppose has lived rent-free in my head since before we had COVID vaccines, I found myself steering the all-knowing, all-seeing AI toward a partnership rather than letting either of us run the show. One moment I would unquestioningly follow Claude’s direction to implement a grid using Tailwind CSS, and the next I’d push back on implementing a TypeScript interface as a premature optimization.

I asked Claude to reflect on the session, which produced what it considered to be the “core insight” of the night:

AI doesn't replace developer judgment - it amplifies it. The most effective AI-assisted learning happens when developers maintain strong opinions about code quality, ask skeptical questions, and treat AI as a research and implementation partner rather than an authority.

this might be stupid

So, I’m learning to code using a tool that might make coding obsolete. But people still tinker with archaic shit all the time, so I suppose it’s not an entirely irrational act. Is analog film still a thing? People still sew their own clothes and bake their own bread.

I guess coding in the age of AI is my sourdough.

As familiar as this territory is (I’ve taught myself HTML, CSS, JavaScript, PHP, SQL, Python, and React at various points), learning this way feels excitingly foreign. Moving from static online tutorials to building something real like wellstead with an AI tutor is kind of exhilarating. Whether that’s just because I see lines of code turning into UI or because I feel a little like I’m cheating is something I suppose I need to explore about myself.

But it might also be exhilarating because this is a glimpse of at least some version of the future of learning. Granted, it takes real work to keep the models firmly grounded in “help me learn” mode rather than “do it for me” mode. It’s hardly the perfect tutor, especially since all models still constantly show glimmers of sycophancy. But as Ethan Mollick says in Co-Intelligence, “whatever AI you are using right now is going to be the worst AI you will ever use.”

Does that mean whatever janky way I’m using AI to learn right now is effectively hard mode? Maybe. But I imagine the process of making bread from scratch is part of the fun, or if not the fun, at least the sense of accomplishment.

As I write this in June of 2025, the future of coding is really unclear, which, I acknowledge, makes this a somewhat dubious exercise in personal or professional development. Many people believe that the path to obviating manual coding is clear even if we’re not quite there yet (although Geoffrey Huntley certainly makes it look like we’re there). Even so, is there some value in knowing how things work under the hood? I’d like to believe so, but you don’t see me trying to build my own processor or RAM, despite the absurd amount of time I spend using computers every day.

So, yeah, this might be dumb. But I’m curious to see where it leads. If you are, too, follow along.

Maybe we’ll both be surprised.