fill where now?

coding with a pushmi-pullyu

It took me about six hours to get my silly little web app to pull data from a database.

It’s absurd, really.

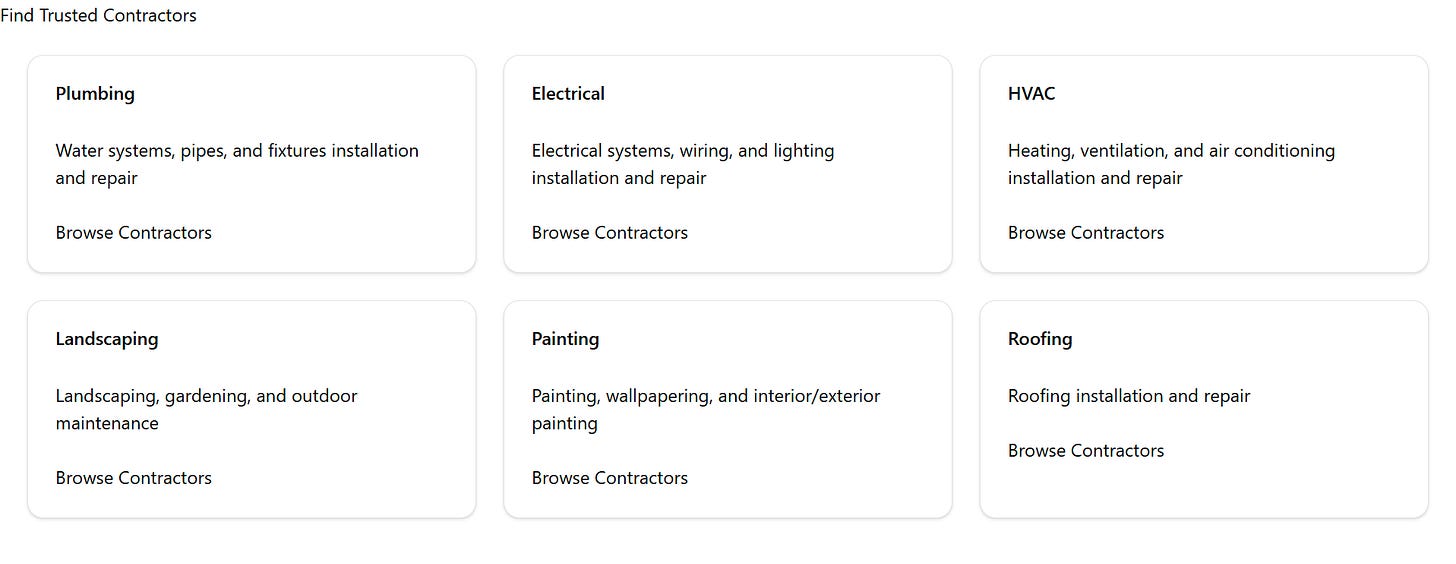

In the time that pure vibe coders are creating multimillion-dollar businesses, all I have to show for my work is a handful of featureless cards that just happen to be pulling text from a database.

It’s absurd, but it’s also kind of fulfilling, in a finding-zen-through-building-a-carburetor sort of way.

the level of water

In The Fifth Discipline, Peter Senge lays out an example of a feedback loop in a system, one that has stuck with me since I read it seven years ago:

In everyday English when we say, “I am filling the glass of water” we imply, without thinking very much about it, a one-way causality—“I am causing the water level to rise.” … But it would be just as true to describe only the other half of the process: “The level of water in the glass is controlling my hand.”

This perspective is at once elegant and uncomfortably contrary because the assignment of agency becomes nonobvious. Replace the human hand with any other mechanism for shutting off the water and we might frame the level of water as being in control.

So am I coding with AI, or is the AI coding with me? Who's the level of water and who's the hand?

I don’t have any harebrained notion (yet) that an LLM has me hitched up on marionette strings. Controlling me isn’t worth however many gigawatts of energy Claude is burning as I bumble my way through code. But it is objectively true that I learn more, and more independently, when the model goes even a little off the rails.

On the one hand, I’m guiding the AI to help me learn, and on the other, the AI is guiding me toward learning. The push-and-pull is like a dialectic leading us both closer to the truth, at least until the model hits its context window limit and forgets why it exists.

environmental disaster

For example, let’s examine a completely solved problem that would be trivial for an experienced software engineer but for an amateur like me is roughly equivalent to landing a rover on the moon: environment variables.

Environment variables live outside your code, so sensitive or environment-specific values (database keys, for example) stay under tighter access control and can easily be swapped between testing and production.
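For anyone as new to this as I am, here is a minimal sketch of the idea in Python. The variable names `APP_ENV` and `DATABASE_URL` and the function `load_settings` are hypothetical, chosen only for illustration; a real project may use different names and a different language.

```python
import os
from typing import Mapping


def load_settings(env: Mapping[str, str] = os.environ) -> dict:
    """Read configuration from the environment instead of hard-coding it.

    APP_ENV and DATABASE_URL are hypothetical names used for illustration.
    """
    # Fall back to "development" when nothing says otherwise.
    app_env = env.get("APP_ENV", "development")

    # Secrets get no default: fail loudly rather than guess.
    database_url = env.get("DATABASE_URL")
    if not database_url:
        raise RuntimeError("DATABASE_URL is not set in the environment")

    return {"app_env": app_env, "database_url": database_url}
```

The code never contains the database key itself. Switching from testing to production means changing what the variable holds in the shell or the hosting dashboard, not editing the program.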

This is one of those things that’s foundationally trivial. But I couldn’t get it to work in a local test environment, which is exactly where you’d expect it to be simple and just work.

Fortunately, Claude had the answers. Unfortunately, they were all the wrong answers.

The thing is, the answer exists in documentation. I found it by doing what is quickly becoming an anachronism: searching web pages on the internet using Google. And that raises the question: if I could find the answer using Google, why didn’t the oracle that is Claude have it at the ready, or at least conduct the search as well as I did to figure it out?

You might speculate—and you might be right—that this is just one of those quirks along the jagged frontier that AI isn’t good at. But I think the reason is far less flattering: AI just doesn’t assume that I’m as ignorant as I am when it comes to these sorts of fundamentals.

Somewhere, in whatever passes for Claude’s unconscious, it weighed the probability that I’m dumb enough not to understand how to set up environment variables for testing, bet against it, and lost. Simple as that.

help me help myself

It surprises me how common these fundamental failures are in my sessions trying to learn with AI. They’re common enough that I’m even more awestruck by the legitimate software engineers who are literally deploying dozens of autonomous agents to write code soup-to-nuts. There’s something almost mystical about whatever context and reinforcement they’ve figured out how to provide to the models to get them to hum without running into these failures on their own.

Is this the new fate of novice engineers, to work with intelligent machines that fail to recognize how dumb their human collaborators are at the task at hand?

It’s too soon to tell, but I have to wonder how much I’m learning and, probably more importantly, what I’m learning. Am I actually learning to code? Have I put in enough reps that I’ve started to establish any kind of muscle memory that would help me engineer something unaided? Or am I just learning how to help AI help me help myself?

Sounds like another experiment in the making.