confidence game

what to do when your model outruns its clock

the setup

On July 14, 2025 I started building the contractor list within wellstead. In another timeline in which AI was less prolific, the work would have taken a weekend, two at the most. It would have meant copying the patterns of the service category list I’d just finished and fumbling a bit on the dynamic routes that power displaying contractors by category.

Instead, it took me nearly eight weeks of sporadic effort to ship something that a child using Lovable would be embarrassed to claim as their own.

See for yourself:

This is the moment when I expect someone who keeps reading these posts to wonder—and I mean really ponder—“What is this guy doing to himself and why doesn’t he stop?”

There’s a version of this story where the moral is that, when a billion things that were hard yesterday are easy today, it’s easy to get distracted by opportunity. That 100% happened, with days and sometimes full weeks between coding sessions that might deliver dozens of lines of code representing a minuscule chunk of work.

But this is not that version of the story. This version is about how my skepticism about whether AI output is universally good finally bit me in the ass and sent me down a weird rabbit hole that helped me understand, at least a little, how people can get so caught up in models reinforcing their beliefs.

the tell

Be warned that this doesn’t work without getting at least a little bit technical.

I’ve written before that I learn really well by following test-driven development, meaning that I write tests that fail before I write any application code. This helps me understand and get a feel for the shape of the app as represented by code. It helps me learn the architecture and how data works its way through the bowels of a series of scripts. It also pays off later when I inevitably break something and the test suite lets me know that something’s gone horribly wrong.
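For anyone who hasn't seen test-first development in practice, here is a minimal sketch of the rhythm. Everything in it is hypothetical: the `Contractor` shape, the `groupByCategory` helper, and the tiny `expectEqual` stand-in for a real test runner are made up for illustration and are not wellstead's actual code.

```typescript
// Hypothetical shape for illustration; wellstead's real data model isn't shown in this post.
type Contractor = { name: string; category: string };

// Tiny stand-in for a test runner's assertion so the sketch needs no imports.
function expectEqual<T>(actual: T, expected: T, label: string): void {
  if (actual !== expected) {
    throw new Error(`${label}: expected ${String(expected)}, got ${String(actual)}`);
  }
}

// Step 1 (red): the test is written first. Without step 2 below,
// this fails (in TypeScript it would not even compile).
function testGroupsContractorsByCategory(): void {
  const grouped = groupByCategory([
    { name: "Ada Plumbing", category: "plumbing" },
    { name: "Volt Electric", category: "electrical" },
    { name: "Pipe Pros", category: "plumbing" },
  ]);
  expectEqual((grouped["plumbing"] ?? []).length, 2, "plumbing count");
  expectEqual((grouped["electrical"] ?? []).length, 1, "electrical count");
  expectEqual("roofing" in grouped, false, "unknown category is absent");
}

// Step 2 (green): the smallest implementation that makes the test pass.
function groupByCategory(contractors: Contractor[]): Record<string, Contractor[]> {
  const grouped: Record<string, Contractor[]> = {};
  for (const contractor of contractors) {
    if (!(contractor.category in grouped)) {
      grouped[contractor.category] = [];
    }
    grouped[contractor.category].push(contractor);
  }
  return grouped;
}

testGroupsContractorsByCategory();
console.log("contractor grouping test passed");
```

The point isn't the helper itself. Writing the test first forces a decision about what the data should look like coming out the other side, which is exactly the "shape of the app" part that helps me learn.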

As I was building this list of contractors (of course heavily guided by Claude via Cursor), the pattern felt off. One might say it had a smell. I couldn’t have articulated what made it seem that way, but the way the code was emerging out of my collaboration with AI—in spite of it working perfectly fine—just sort of gave me the heebie-jeebies.

Fully aware that I was a poster child for the Dunning-Kruger effect at that moment, I let the weird pattern continue to unfold, but then the testing patterns started to get weird, too. I couldn’t shake the feeling that I’d let AI guide me toward doing something wrong and that I didn’t know enough to work out on my own what was causing the cognitive dissonance.

There’s a lovely term in programming for a pattern or way of implementing something that follows the conventions of a language or framework: idiomatic. Knowing this opens up interesting semantic search avenues because you can inquire not just about code using a framework like Next.js but about idiomatic Next.js code.

Acknowledging that I am by no means fluent in any of the frameworks or languages that I’m using to write code, what I’d written felt decidedly un-idiomatic, as if I had said to someone “let’s make sure all our ducks are on the same page” or “we’ll burn that bridge when we come to it”.

Suffice it to say that I had this ineffable sense that something was wrong but no lived experience to help me determine whether anything actually was, which is exactly when one of the aforementioned distractions stirred the pot: I decided to give OpenAI’s Codex a chance to roast my code or let me off the hook once and for all.

Whoops.

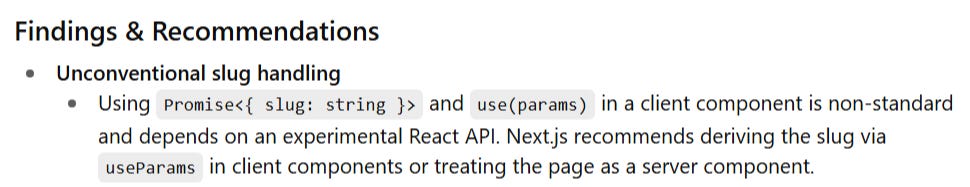

Codex called my implementation “non-standard” and claimed that I was using an “experimental” feature. It made the pattern-matching part of my brain leap up from its seat and shout “SEE?!” in gleeful vindication. It was like I had actually been learning, after all, and developing some intuition for the language through repetition. Everything was going to plan!

Except Codex was wrong. My implementation is as of now quite standard and the feature it referenced is no longer experimental. Codex would have been right in 2024, but it was wrong now.

The pattern was, in fact, idiomatic for the framework as it has evolved.

It was fine the whole time.

the blow-off

Now, to give myself some grace, the pattern was new (to me) and sort of a shift from any React code I’d ever written in the past. I was picking up on a real difference between modern Next.js and my comfort zone.

The valuable lesson here—the moral of this version of the story—is that sometimes knowing that the jagged frontier of AI capabilities exists can be a double-edged sword. On the one hand, you’re equipped with a healthy dose of skepticism about everything in which you’re not an authority; on the other hand, you lack the authority to identify when inevitably some weird thing is actually the right thing.

OpenAI recently shared a great write-up about why language models hallucinate. You should read it, but in short we’ve trained models to be great guessers and to guess even when guessing isn’t in the best interest of the human on the other side of the chat. It’s like an unwitting but insidious long con that pulls the rug out from under you when you least expect it.

That doesn’t detract from the many things at which AI excels, but for as long as this con game exists, considering and reconsidering it is a habit we all must adopt when relying on AI for anything beyond entertainment.

In this case, I probably would have been better off and more effective as a human using a keyboard by referencing static, deterministic documentation that defined very clearly the pattern I should use. But the documentation can’t adapt in real time to my level or my problem. It can’t guide me kinesthetically toward a solution.

For now, that’s a capability reserved for fallible agents, humans and AI.