AI by default #3

comic book values, home improvement, and tree care

ChatGPT is three years old today. It now has around 800 million weekly users worldwide, and it created an entire category of technology (at least from a product marketing perspective) that continues to develop at a pace that seems insane.

Its ubiquity makes generative AI seem mundane. It’s easy to lose sight of the fact that the vast majority of people simply could not create an image from a text prompt in 2022 and the concept of vibe coding anything would have seemed delusional.

To put our current trajectory into perspective, Instagram, Uber, and the standalone Google Maps app for iPhone didn’t come onto the scene until after the iPhone’s third birthday, but we already have what seem like generation-defining, viable companies (Cursor, Gamma, Lovable) that have grown up in about half the time.

In some circles, “AI by default” is gaining traction as a posture. When I realized how long it’d been since I’d written one of these, it occurred to me that reaching for AI to solve problems has started to seem mundane, the use cases almost trivial. It’s becoming routine for me to ask, “how can I get AI to do most of this instead of me?”

So here are a handful of ways I’ve worked with AI to lighten my day-to-day cognitive load.

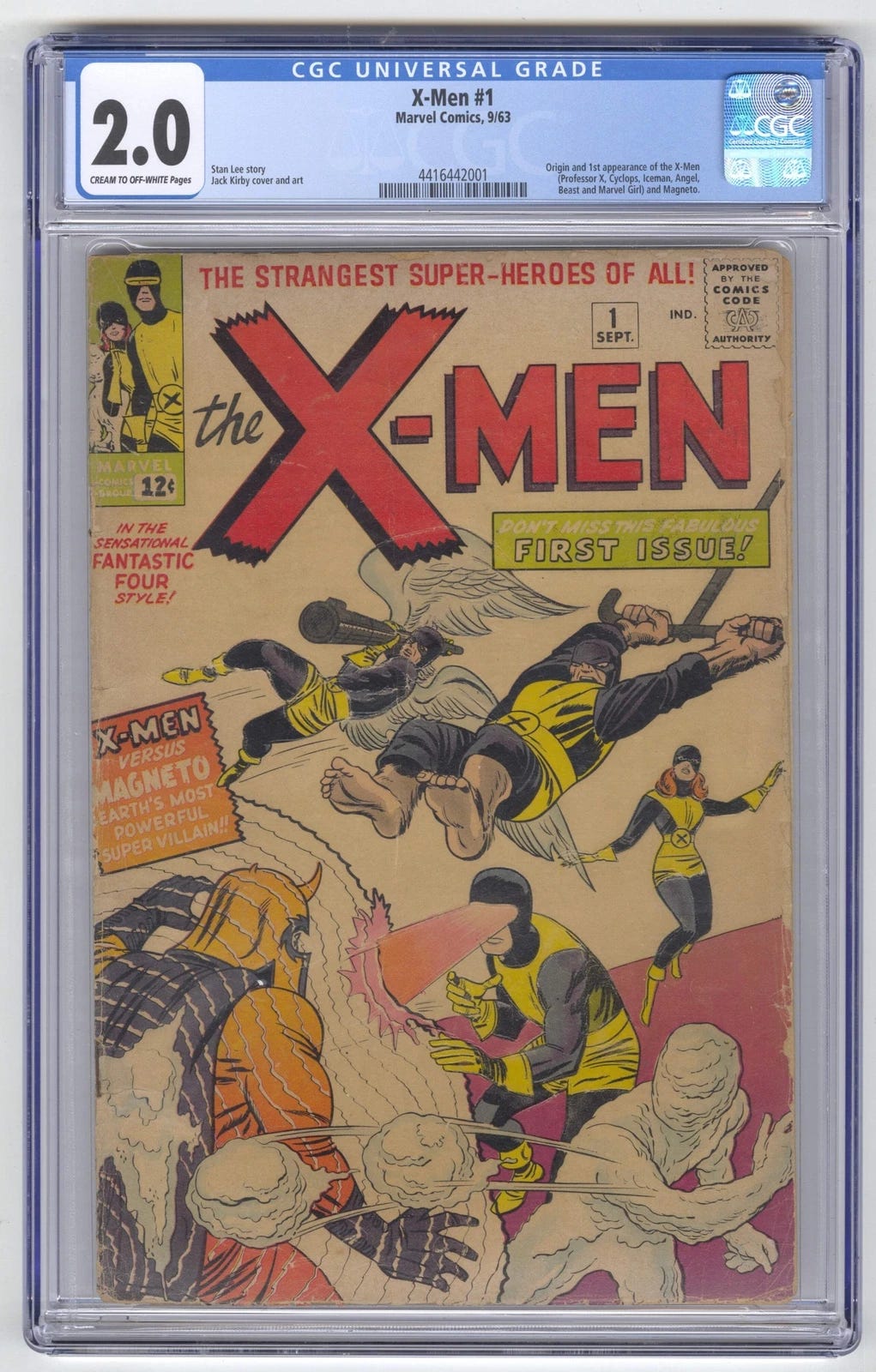

comparing comic book valuations

I have a physical comic book collection spanning the mid- to late-90s, with around 350 individual comic books. For years they’ve been haphazardly tucked away in cardboard boxes and taking up closet space. And, like all good clutter, they occupied mental space at the same time, gently but persistently reminding me that they should be stored better and catalogued.

The process of transferring them to better storage and taking stock of them is something that at least for now sits squarely in human-labor territory, but I took the opportunity to price them at the same time.

Something I discovered in the process is that there’s a difference between the value of a raw comic book (a comic book that’s just stored in a plastic bag) and a slabbed comic book (a comic book that’s been professionally graded and encased in hard plastic).

The weird thing about collectibles is that a single transaction at auction or on eBay can determine their value, and so there’s no reliable formula that says “this comic book will be x% more valuable if I get it slabbed, and so it makes economic sense if the grading costs $y or less.”

For my collection of 350 comic books, this means I have to look at the fair market value of each book individually to figure out if there’s an objective economic incentive to getting it slabbed.

the human way

You can imagine the rote administrative overhead that could accomplish this task. With some sort of spreadsheet listing all of my comic books, I could painstakingly record the raw and slabbed value for each of them and apply some simple arithmetic to figure out if slabbing makes raw economic sense.
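The "simple arithmetic" here can be sketched in a few lines of Python. This is only an illustration of the spreadsheet logic, not the tool I actually used: the `Comic` fields, the function names, and the flat `grading_cost` are all assumptions, and the sketch ignores shipping, fees, and the chance that a book grades lower than hoped.

```python
from dataclasses import dataclass


@dataclass
class Comic:
    title: str
    raw_value: float      # fair market value ungraded, in dollars
    slabbed_value: float  # fair market value graded and encased, in dollars


def slabbing_gain(comic: Comic, grading_cost: float) -> float:
    """Value added by slabbing, net of the (assumed flat) grading cost."""
    return comic.slabbed_value - comic.raw_value - grading_cost


def worth_slabbing(comics: list[Comic], grading_cost: float) -> list[Comic]:
    """Keep only the books where slabbing adds more value than it costs."""
    return [c for c in comics if slabbing_gain(c, grading_cost) > 0]


if __name__ == "__main__":
    # Placeholder books and prices, purely for illustration.
    collection = [
        Comic("Placeholder Comic A", raw_value=5.0, slabbed_value=20.0),
        Comic("Placeholder Comic B", raw_value=40.0, slabbed_value=120.0),
    ]
    for comic in worth_slabbing(collection, grading_cost=30.0):
        print(comic.title, slabbing_gain(comic, grading_cost=30.0))
```

The tedious part is not this calculation but looking up the two values for every book, which is the part worth handing off.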

With the process entailing several clicks, visual scanning, and typing, going through one item per minute seems like a reasonable average, anticipating that any flow state that went faster would inevitably get thrown off by an error or something that didn’t make sense.

So this would be a great task for me as a human if I had around 6 hours (350 comic books at a minute apiece) to kill doing mindless data entry.

AI by default

For a little while I thought I was going to be saddled with this 6-hour task. I had high hopes for a couple of tools that just couldn’t get the job done, and then my stubbornness led me to one that finally did.

ChatGPT Agent Mode

This is the perfect sort of task for a computer-using agent. I spun it up with its mission defined and perhaps too willingly used my login credentials to get it into the apps I was using for comic book inventorying and pricing.

It worked for 15 minutes on its own and came back with its recommendations … for ten comics. And it very clearly thought it was done.

Undeterred, I finagled it into working on its own for another 10 minutes, and it came back with recommendations for just three comics.

Apparently ChatGPT doesn’t want to do this kind of work any more than I do.

Probably this task collided with something in its system instructions telling it not to support long-running sessions, so I shelved it and moved on.

Claude Chrome Extension

Anthropic recently opened up access to its Claude Chrome extension, currently in beta, and setting an LLM loose in my own browser seemed like a perfect fit for this job. The extension creates its own tab groups to which it restricts its activity, and it seemed like it could work more or less autonomously while I did other things in the browser.

Unfortunately, it fell prey to the same sort of baked-in laziness as ChatGPT’s Agent Mode, in that I couldn’t coerce it to work for more than 10 minutes without it stopping to check in: “This is going to take hours. Are you sure you want to do this?”

And I was sure that I wanted AI to do it, but without it talking back.

Claude Code + Chrome DevTools MCP

This sounds a lot more technical than it is.

Remember that last time I wrote one of these I’d been using Claude Code more and more for things that have nothing to do with code.

With DevTools MCP, Claude Code can work within a browser autonomously in all of the same ways a human developer might use different browser tools to debug a web application. This means that with this set of tools it can browse, click on things, and even run its own scripts.

With this setup, Claude Code worked for 28 hours straight and meticulously evaluated all of the comic books in my inventory. As far as I can tell, it did this consistently and with zero hallucinations, because the values were read from the pages it visited rather than generated; the AI part was just it controlling the browser.

The most incredible thing about how this worked was that Claude Code came up with a plan to scaffold the work for itself, keep track of its progress, and set itself up so that when it inevitably ran out of context it knew exactly how to pick back up after its context window was automatically compacted.
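I didn’t save the scaffolding Claude Code built, so what follows is not its actual plan. It’s a minimal sketch of the general pattern, assuming progress is kept in a JSON file on disk: finish one item, write the result down, and skip anything already recorded when starting over. The names `process_all`, `evaluate`, and the progress file path are all hypothetical.

```python
import json
from pathlib import Path


def load_progress(path):
    """Return saved results, or an empty record if nothing has been saved yet."""
    p = Path(path)
    if p.exists():
        return json.loads(p.read_text())
    return {"done": {}}


def save_progress(path, progress):
    """Write to a temp file first, then swap it in, so a crash can't leave a half-written file."""
    tmp = Path(str(path) + ".tmp")
    tmp.write_text(json.dumps(progress, indent=2))
    tmp.replace(path)


def process_all(items, evaluate, path):
    """Evaluate each item once, checkpointing after every item.

    `evaluate` stands in for the slow step (here, looking up one comic);
    items already in the progress file are skipped on restart.
    """
    progress = load_progress(path)
    for item in items:
        if item in progress["done"]:
            continue
        progress["done"][item] = evaluate(item)
        save_progress(path, progress)
    return progress["done"]
```

Because the state lives in a file rather than in the agent’s context window, losing that context costs at most the one item that was in flight.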

This escalation in capability (Claude Code running autonomously at a task for 28 hours with zero human intervention and succeeding) makes economic sense, in that there’s tremendous demand for AI agents that can run for hours or days to generate code. But it hadn’t occurred to me until I saw this unfold that long-running tasks that have nothing to do with generating code could emerge from the same pattern.

For any repetitive digital task, we all need to reach for this tooling first, especially when hours of our time are at stake.

parsing an inspection report

I recently got a home energy audit for my 1913 home (spoiler: it leaks like a sieve).

The report is about 20 pages long and contains a mix of findings, some better served by an insulation specialist and some that just need smaller point solutions. I kept procrastinating on combing through it for the relatively small findings that a handyman could address.

the human way

The way I would have handled this unaugmented by AI would have been to spin up a spreadsheet, outline each of the findings, and take my best guess at what I might expect a handyman to do. I’d probably have been wrong a lot because I don’t have much intuition for what a generalist can handle versus what needs a specialist.

AI by default

Instead, I was pleased to find that LLMs have gotten really good at reading and synthesizing PDFs. Because I wanted a more trustworthy answer to a question I can’t judge myself (what I could expect a handyman to do), I ran the audit report through ChatGPT, Claude, and Gemini so I could compare their answers.

Not only did they all come back with a similar list of findings for me to present to a handyman, but they also provided me with some high-level cost estimates based on hourly rates and materials. The estimates diverged between the three models based on how they thought a handyman might address the findings, but this at least gives me a basis for evaluating an estimate.

when to water a tree

Over the summer, I planted a young persimmon tree in my back yard.

I have not, as far as I can recall, ever purchased and planted a tree, nor have I ever been solely responsible for the health and wellbeing of any plant.

Apparently one of the ways to kill a newly planted tree is to screw up with water, but the watering advice for new trees follows Schrödinger’s playbook in that it seems you can’t know whether you’re starving your tree of water or drowning its root ball until it comes out of the other end of winter alive or dead.

the human way

The human way is reading all the things. I read all the things, and I was still confused.

AI by default

After feeding ChatGPT several independent sources, it helped me sift through what seemed to be conflicting instructions and gave me a pretty simple if-then playbook for watering the tree.

Using AI to help me take care of a tree admittedly isn’t terrifically interesting, but I found myself escalating AI’s involvement over time.

At first, I just asked for instructions, which I followed.

Later, it became interactive and real-time; I took a picture of a soil-covered spade and let it react to the moistness of the soil.

Most recently, as our conversation became more and more formulaic, I realized that I could use AI to automate some of the AI bits, ultimately asking ChatGPT to create a recurring task for itself to check the prior week’s precipitation and weather and proactively give me advice for watering.
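The recurring check boils down to a very small if-then rule. Here is a rough sketch of that shape, not the playbook ChatGPT actually gave me: the function name is made up, the rainfall readings are assumed to come from whatever weather source you trust, and the threshold is deliberately left as an input because the right number depends on the tree, the soil, and the season.

```python
def needs_watering(daily_rain_mm, threshold_mm):
    """Return True if the past week's total rainfall fell short of the threshold.

    daily_rain_mm: seven daily rainfall totals in millimeters, oldest first.
    threshold_mm:  weekly rainfall below which the tree should be watered
                   (an assumption the caller must supply; not horticultural advice).
    """
    if len(daily_rain_mm) != 7:
        raise ValueError("expected exactly seven daily readings")
    return sum(daily_rain_mm) < threshold_mm
```

A real check would also account for temperature and soil moisture, which is where the picture of the spade came in.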

The dynamic evolved from “here’s the information I need you to synthesize” to “here are the conditions right now I need feedback on” to “run this on autopilot like an assistant”.

The first check hasn’t triggered yet, but I’m eager to see how it goes.

In just the few months that have passed since I wrote the last AI by default, what’s possible has shifted dramatically. It’s becoming easier and easier to fold AI into day-to-day responsibilities in a way that’s more productive than experimental, because it requires less tinkering and less designing around its quirks.

And the more I let AI into my day-to-day, the more I’m able to recognize the systems and support structures that I can put into place (also with AI’s help!) to make it run more smoothly and with less manual intervention.

Extrapolating another handful of months, it seems reasonable to expect that the human in the loop will be less about orchestration and more about applying judgment and taste.

… until we make agents for those, too.